Beyond Passive Viewing: A Hybrid Learning Platform Augmenting Video Lectures with Conversational AI

Mohammed Abraar, Raj Dandekar, Rajat Dandekar, Sreedath Panat

Master Generative AI and LLMs. Work on industry-level LLM projects. Publish LLM research papers. From foundations to RAG systems and multimodal models, build production-grade AI with Python and LangChain.

Research from our cohorts has been accepted, presented, and archived across leading venues in machine learning, scientific computing, and applied AI.

Large Language Models and GenAI are shifting from academic papers to foundational infrastructure for every industry. Here is why the 2024-2026 window matters.

Projected ML market by 2030, up from ~$55-75B in 2024 (CAGR above 30%), with GenAI emerging as a key industrial segment.

OpenAI's ChatGPT and LangChain have become the standard infrastructure for building LLM-powered applications across industries.

Retrieval-Augmented Generation has become the default architecture for enterprise AI, combining LLMs with domain-specific knowledge bases.

Companies like Google, Meta, and Amazon have dedicated GenAI divisions, and firms across industries are actively hiring LLM and prompt engineering specialists.

From RAG-powered chatbots to multimodal vision-language systems, this bootcamp equips you to build production-grade GenAI applications across industries.

We teach four interconnected pillars that form the foundation of modern Generative AI. Each represents a critical capability for building, deploying, and researching LLM-powered systems.

Understand the full LLM evolutionary tree, from BERT to GPT to Flan-T5. Learn to fine-tune models for classification, sentiment analysis, and topic modeling. Deploy models locally using Hugging Face and build practical pipelines with the ChatGPT API.

Master prompting fundamentals through advanced methods: in-context learning, Chain-of-Thought, and Tree-of-Thought reasoning. Build complex LLM applications using LangChain chains, memory modules, guardrails, and autonomous agents from scratch.

Build end-to-end Retrieval Augmented Generation pipelines. Learn dense retrieval with LLM embeddings, chunking strategies, reranking methods, and evaluation metrics (MAP/nDCG). Create robust, production-grade RAG chatbots for real-world use cases.
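To make the evaluation metrics named above concrete, here is a minimal sketch of nDCG and MAP in plain Python. It is an illustration under stated assumptions, not the bootcamp's own evaluation code: the function names are hypothetical, gain is linear, and both the ideal ranking and the average-precision denominator are computed only from the results present in the ranked list (it assumes every relevant document was retrieved).

```python
import math
from typing import Sequence


def dcg_at_k(relevances: Sequence[float], k: int) -> float:
    """Discounted cumulative gain over the top-k results (linear gain)."""
    return sum(rel / math.log2(rank + 2) for rank, rel in enumerate(relevances[:k]))


def ndcg_at_k(relevances: Sequence[float], k: int) -> float:
    """DCG divided by the DCG of the same results sorted best-first."""
    ideal = dcg_at_k(sorted(relevances, reverse=True), k)
    return dcg_at_k(relevances, k) / ideal if ideal > 0 else 0.0


def average_precision(is_relevant: Sequence[bool]) -> float:
    """Mean of precision@rank, taken at each rank that holds a relevant result.

    Assumption: all relevant documents appear somewhere in the ranked list.
    """
    hits, total = 0, 0.0
    for rank, rel in enumerate(is_relevant, start=1):
        if rel:
            hits += 1
            total += hits / rank
    return total / hits if hits else 0.0


def mean_average_precision(runs: Sequence[Sequence[bool]]) -> float:
    """Average of per-query average precision."""
    return sum(average_precision(r) for r in runs) / len(runs) if runs else 0.0
```

A ranking that places graded relevances in descending order, such as `[3, 2, 1, 0]`, scores an nDCG of 1.0; pushing relevant results lower in the list reduces both metrics.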

Explore Vision Transformers (ViTs), understand how they differ from CNNs, and learn vision-language models including CLIP, BLIP-2, and LLaVA. Build multimodal AI systems that bridge text, images, and structured data.

Learn to set constraints and safety measures for LLM outputs. Implement guardrails for quality control, understand model quantization (8/4-bit, GPTQ/AWQ) for efficient deployment, and build responsible AI systems.
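As a rough illustration of what low-bit quantization does to model weights, the sketch below applies simple symmetric absmax round-to-nearest quantization. This is deliberately the simplest scheme, not GPTQ or AWQ (which choose quantized values using calibration data); the function names are hypothetical and the weights are a plain Python list rather than a tensor.

```python
from typing import List, Tuple


def quantize_absmax(weights: List[float], bits: int = 8) -> Tuple[List[int], float]:
    """Map floats to signed integers in [-qmax, qmax] using one shared scale."""
    qmax = 2 ** (bits - 1) - 1  # 127 for 8-bit, 7 for 4-bit
    max_abs = max((abs(w) for w in weights), default=0.0)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    # Round to the nearest integer step, then clamp to the representable range.
    quantized = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized: List[int], scale: float) -> List[float]:
    """Recover approximate float weights from integers and the shared scale."""
    return [q * scale for q in quantized]


if __name__ == "__main__":
    weights = [0.5, -1.0, 0.25, 0.03]
    for bits in (8, 4):
        q, scale = quantize_absmax(weights, bits)
        restored = dequantize(q, scale)
        worst = max(abs(w - r) for w, r in zip(weights, restored))
        print(f"{bits}-bit: ints={q} max_error={worst:.4f}")
```

The reconstruction error per weight is bounded by half the scale, which is why 4-bit storage loses more precision than 8-bit for the same weight range.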

Work on industry-level LLM research projects aimed at publication. Learn to formulate research problems, design experiments, validate hypotheses, and write scientific papers for conferences and journals.

Publication-quality diagrams illustrating the core systems you will master in this bootcamp.

A high-level overview of the four core pillars: LLM Foundations and Fine-Tuning, Prompt Engineering with LangChain, Semantic Search and RAG Systems, and Multimodal Language Models.

The complete Retrieval-Augmented Generation pipeline: document ingestion, chunking, embedding, vector storage, dense retrieval with cross-encoder reranking, and LLM-based answer generation.
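The stages listed above can be sketched end to end with the standard library alone. This is a toy under loud assumptions: term-count vectors stand in for dense LLM embeddings, an in-memory list stands in for a vector database, a term-overlap score stands in for a cross-encoder reranker, and the final step only builds the prompt that would be sent to an LLM. All names are hypothetical.

```python
import math
import re
from collections import Counter
from typing import List, Tuple


def chunk_text(text: str, size: int = 12, overlap: int = 4) -> List[str]:
    """Split text into overlapping windows of `size` words (requires size > overlap)."""
    words = text.split()
    if not words:
        return []
    step = size - overlap
    return [" ".join(words[i:i + size]) for i in range(0, max(len(words) - overlap, 1), step)]


def embed(text: str) -> Counter:
    """Toy embedding: lowercase term counts (a stand-in for a dense embedding model)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


class VectorStore:
    """In-memory store of (chunk, vector) pairs with brute-force search."""

    def __init__(self) -> None:
        self.items: List[Tuple[str, Counter]] = []

    def add(self, chunks: List[str]) -> None:
        self.items.extend((c, embed(c)) for c in chunks)

    def search(self, query: str, k: int = 3) -> List[str]:
        q = embed(query)
        scored = sorted(self.items, key=lambda item: cosine(q, item[1]), reverse=True)
        return [chunk for chunk, _ in scored[:k]]


def rerank(query: str, candidates: List[str], top_n: int = 2) -> List[str]:
    """Placeholder reranker: orders candidates by the fraction of query terms they cover."""
    q_terms = set(embed(query))

    def coverage(chunk: str) -> float:
        return len(q_terms & set(embed(chunk))) / len(q_terms) if q_terms else 0.0

    return sorted(candidates, key=coverage, reverse=True)[:top_n]


def build_prompt(query: str, contexts: List[str]) -> str:
    """Assemble the grounded prompt an LLM would receive; no model is called here."""
    joined = "\n".join(f"- {c}" for c in contexts)
    return f"Answer using only the context below.\n\nContext:\n{joined}\n\nQuestion: {query}\nAnswer:"


if __name__ == "__main__":
    docs = [
        "Vision transformers split an image into patches.",
        "Reranking reorders retrieved chunks with a cross-encoder.",
        "Quantization stores weights in fewer bits.",
    ]
    store = VectorStore()
    for doc in docs:
        store.add(chunk_text(doc))
    question = "How does reranking work with a cross-encoder?"
    print(build_prompt(question, rerank(question, store.search(question, k=3))))
```

Retrieval casts a wide net with a cheap similarity score, and reranking then spends more effort on the few candidates that survive, which is the usual reason the two stages are kept separate.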

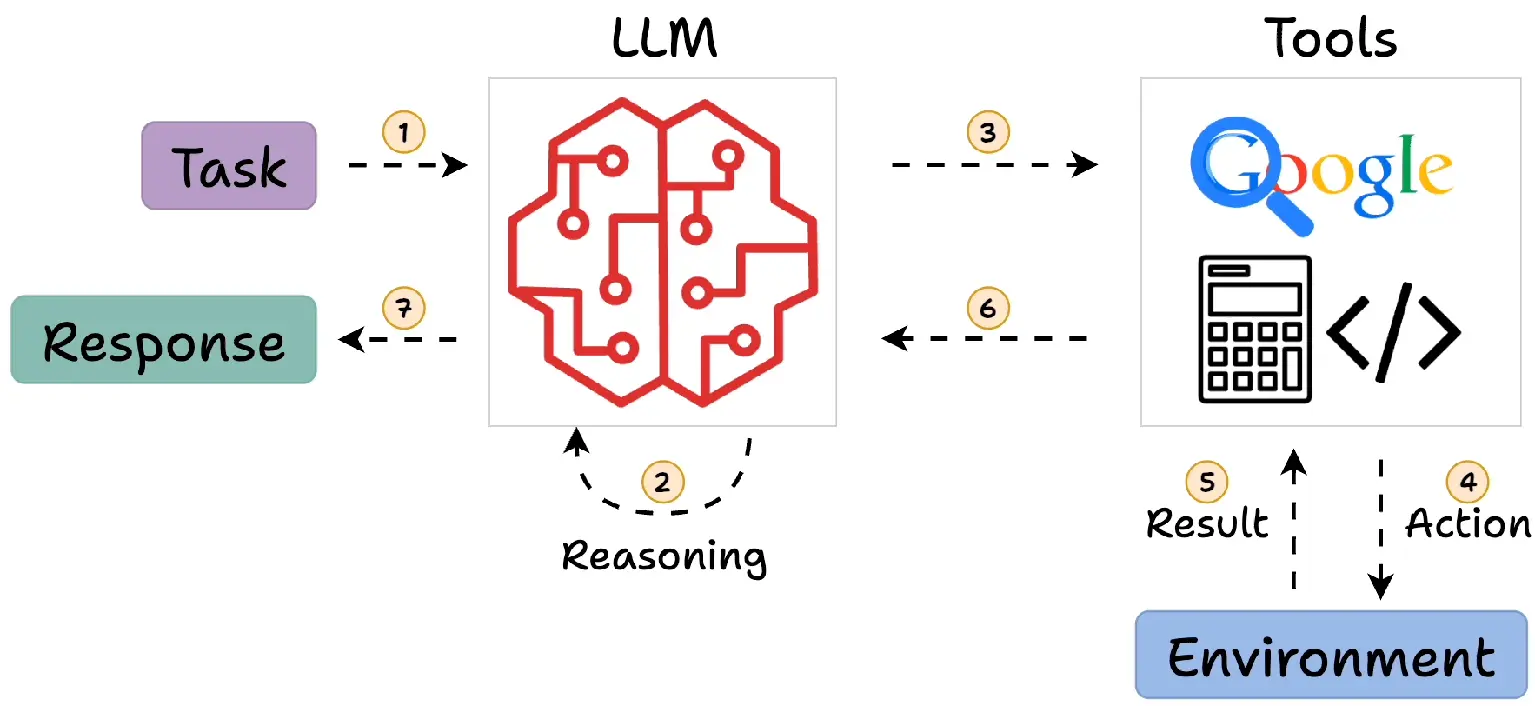

An LLM Agent built with LangChain showing the central reasoning engine, tool calling, short-term and long-term memory, chain orchestration, and the Observe-Think-Act reasoning loop.
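The reasoning loop described above can be sketched without any framework. This minimal example does not use LangChain: the `think` function is a rule-based stub standing in for an LLM call, `add_numbers` is a hypothetical tool, and the `memory` list plays the role of short-term memory for a single run.

```python
import re
from typing import Callable, Dict, List, Tuple

Tool = Callable[[str], str]


def add_numbers(argument: str) -> str:
    """Hypothetical tool: sum whitespace-separated numbers."""
    return str(sum(float(x) for x in argument.split()))


def think(task: str, memory: List[str]) -> Tuple[str, str]:
    """Stub reasoning step returning (action, argument).

    A real agent would prompt an LLM with the task and prior observations.
    """
    if memory:  # an observation already exists, so report it and stop
        return "finish", memory[-1]
    numbers = re.findall(r"-?\d+(?:\.\d+)?", task)
    if numbers:
        return "add", " ".join(numbers)
    return "finish", "I don't know."


def run_agent(task: str, tools: Dict[str, Tool], max_steps: int = 5) -> str:
    memory: List[str] = []  # short-term memory: observations from this run
    for _ in range(max_steps):
        action, argument = think(task, memory)   # Think
        if action == "finish":
            return argument
        observation = tools[action](argument)    # Act: call the chosen tool
        memory.append(observation)               # Observe: store the result
    return "Stopped: step limit reached."


if __name__ == "__main__":
    print(run_agent("What is 2 plus 3.5?", {"add": add_numbers}))
```

The step limit matters in practice: without it, a policy that never chooses to finish would loop indefinitely.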

Whether you come from computer science, engineering, data science, or any other field, this bootcamp teaches you to build and research with the latest Generative AI tools and techniques.

PhD students and postdocs who want to apply LLMs to their research domain, build RAG systems for literature analysis, or publish papers on Generative AI topics.

Developers looking to integrate LLMs, RAG pipelines, and AI agents into production applications using LangChain, Hugging Face, and modern LLM APIs.

ML practitioners who want to move beyond traditional models and build LLM-powered systems for text clustering, topic modeling, semantic search, and content generation.

Business leaders, product managers, and consultants who want to understand and leverage Generative AI for strategic decision-making and product development.

30 topics spanning LLM foundations, prompt engineering, RAG systems, LangChain agents, and multimodal models.

Everything you need to go from GenAI beginner to building production-grade LLM systems and publishing research.

Production-ready Python code for every session, including LLM fine-tuning, RAG pipelines, LangChain agents, and multimodal applications.

Lifetime access to all session recordings and comprehensive lecture notes covering every Generative AI concept.

Industry-level GenAI projects including RAG systems, LLM agents, and multimodal applications ready for your portfolio or publication.

Join the Vizuara GenAI community on Discord for ongoing collaboration, doubt clearance, and research partnerships.

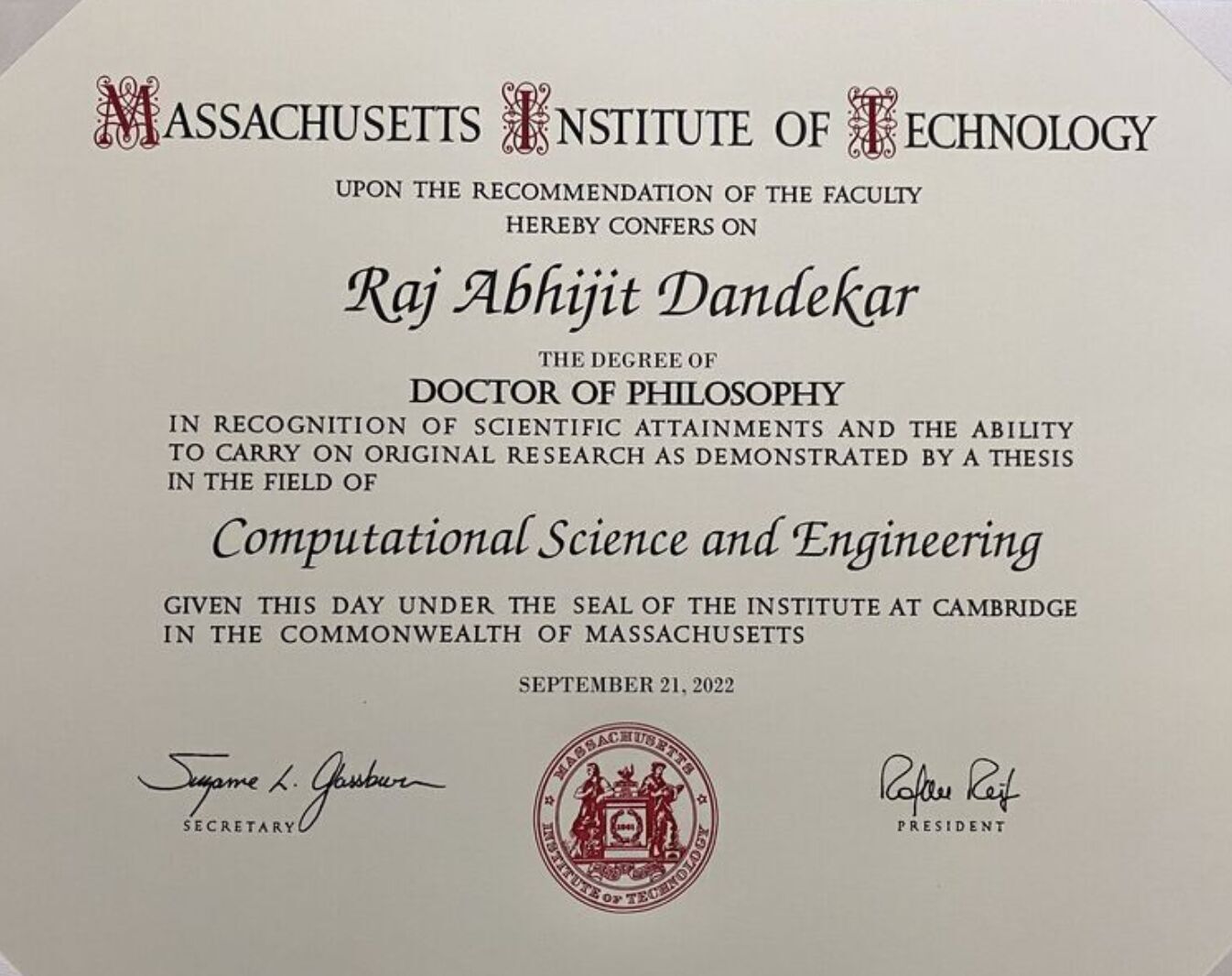

Our instructors are co-founders of Vizuara AI Labs and published researchers in AI and Machine Learning, with expertise spanning LLMs, scientific computing, and applied Generative AI.

Co-founder, Vizuara AI Labs

PhD from MIT, B.Tech from IIT Madras. Dr. Raj specializes in building LLMs from scratch, including DeepSeek-style architectures. His expertise spans AI agents, scientific machine learning, and end-to-end model development.

Co-founder, Vizuara AI Labs

PhD from Purdue University, B.Tech and M.Tech from IIT Madras. Dr. Rajat brings deep expertise in reinforcement learning and reasoning models, focusing on advanced AI techniques for real-world applications.

Co-founder, Vizuara AI Labs

PhD from MIT, B.Tech from IIT Madras. 10+ years of research experience. Dr. Panat brings deep technical expertise from both academia and industry to make complex AI concepts accessible and practical.

Manning #1 Best-Seller

Build a DeepSeek Model (From Scratch)

By Dr. Raj Dandekar, Dr. Rajat Dandekar, Dr. Sreedath Panat & Naman Dwivedi

Our lead instructor Dr. Raj Dandekar holds a PhD from MIT, where he conducted research at the Julia Lab under Prof. Alan Edelman and Chris Rackauckas. Our team brings deep expertise in LLMs, scientific computing, and applied AI research.

A selection of recent GenAI and LLM papers from our research over the past few years. Students in the Industry Professional plan work on similar projects aimed at publication.

Milestones, acceptances, and moments shared by Vizuara students and alumni on LinkedIn.

Choose the plan that matches your goals, from self-paced learning to intensive research mentorship with MIT PhDs.

Save 25%. Originally Rs 40,000.

Save 17%. Originally Rs 1,50,000. MIT and Purdue PhDs as your research mentors.

Everything you need to know about the Generative AI Research Bootcamp.

Join hundreds of researchers and engineers who have built production-grade LLM systems and published AI research. Start building with the latest Generative AI tools and techniques.

Reach out to our team by email with any questions about the bootcamp, curriculum, or application process.

research@vizuara.com

If the email discussion goes well and the candidate is genuinely interested in research, we also offer a 15-minute one-on-one call with our Lead AI Scientist, Prathamesh Joshi.

Prathamesh Joshi

Lead AI Scientist, Vizuara AI Labs

Prathamesh Joshi is a Lead AI Scientist at Vizuara AI Labs, with prior experience at the Max Planck Institute, Germany. His expertise spans Generative AI and Scientific Machine Learning, with a strong publication record across ICLR Workshops, IEEE conferences, and other top venues. He has also mentored students through intensive bootcamps, guiding them toward publications at NeurIPS Workshops, ICLR, JuliaCon, and AAAI Workshops.